Executive Overview

Our goal is to provide a view of the health and value of knowledge offerings across all relevant channels, or put another way: How well are we doing at connecting customers to content?

Why Measure Success With Self-Service?

We generally refer to the benefits of KCS in three stages:

- Operational efficiency

- Customer success with self-service

- Improved products & services

Operational efficiency is relatively easy to measure. After implementing KCS, it doesn't take long to see improved resolution times, improved first-call resolution, and/or reduced escalations. But once those efficiency gains are achieved, they simply become how you run your business. The incremental value has already been realized.

Success with self-service is a mid-term benefit of KCS. It takes some time to publish and improve articles that are findable and usable by an external audience. For years, Consortium Members struggled to quantify the benefits of improved success with self-service. We have lots of anecdotal evidence that customers are happier when they find answers without opening a case, and that Knowledge Workers are happier when they get to solve new problems instead of repeating known answers. However, anecdotes like these often do not help justify an investment in knowledge and self-service.

Justify Your Investment in Knowledge

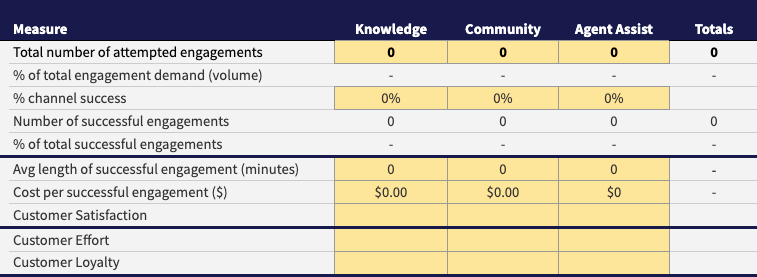

The Service Engagement Measures Spreadsheet is intended to help justify your transformation investment. It demonstrates ROI for your investments in KCS, community, and self-service programs, including staff and tools. It also offers a way to understand customer health and engagement. Completing it may require an investment in analytics, or at least connecting with folks who have the data you need.

Delivering knowledge through self-service and communities is about multiplying reach! We can leverage knowledge captured during assisted interactions at a significantly lower cost. How do we know we are getting full value out of our knowledge investments?

Benchmark Against Yourself

Measuring success with self-service and communities becomes very complex, very quickly. Because of the variables involved, the only meaningful baseline is your own performance. This means you need to define your scope in a way that makes sense in your environment. Judgment is required!

This work was developed and tested over the course of several years with a group of Consortium Members representing 18 widely varied companies, including small, medium, and large enterprises, both publicly and privately traded, focused on both business and consumer audiences, and at multiple stages of KCS maturity. The one constant in our initial findings was that these numbers are only meaningful as part of the larger environment of an organization, and there is little value in attempting to benchmark against other organizations. Our recommendation is to gather data monthly and report out quarterly. After you have a completed spreadsheet and you start to get a sense of the total demand for support outside the assisted model, it is scary to imagine what would happen if you turned off self-service!
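As a rough illustration of "gather monthly, report quarterly, benchmark against yourself," here is a minimal Python sketch. It is not part of the Service Engagement Measures Spreadsheet. The metric (monthly self-service sessions), the sample numbers, and the function names are all hypothetical and stand in for whatever measures fit your own scope.

```python
from collections import defaultdict

# Hypothetical monthly counts for one measure you have chosen to track,
# keyed by "YYYY-MM". These numbers are invented for illustration only.
monthly_sessions = {
    "2023-01": 1000, "2023-02": 1100, "2023-03": 900,
    "2023-04": 1200, "2023-05": 1250, "2023-06": 1150,
}


def quarter_of(month_key):
    """Map a 'YYYY-MM' key to a 'YYYY-Qn' label."""
    year, month = month_key.split("-")
    return f"{year}-Q{(int(month) - 1) // 3 + 1}"


def quarterly_totals(monthly):
    """Roll monthly values up into quarterly totals, sorted by quarter."""
    totals = defaultdict(int)
    for month_key, value in monthly.items():
        totals[quarter_of(month_key)] += value
    return dict(sorted(totals.items()))


def change_vs_baseline(totals, baseline_quarter):
    """Fractional change of each quarter relative to your own baseline quarter."""
    base = totals[baseline_quarter]
    return {q: (v - base) / base for q, v in totals.items()}


if __name__ == "__main__":
    totals = quarterly_totals(monthly_sessions)
    for quarter, change in change_vs_baseline(totals, "2023-Q1").items():
        print(f"{quarter}: {totals[quarter]} sessions ({change:+.1%} vs. baseline)")
```

The comparison is deliberately internal: each quarter is measured only against an earlier quarter from the same organization, never against another company's numbers.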

See the Measurement Matters v6 paper for more benefits offered by a mature KCS implementation.