Assessing Success & Contribution

Measures will be unique to each company, organization, program plan, and individual. It is an understatement to say that measures are a challenge, even for systems we fully understand, let alone for an emerging business practice. The design techniques described here are guidance and ideas, not a prescriptive list of requirements.

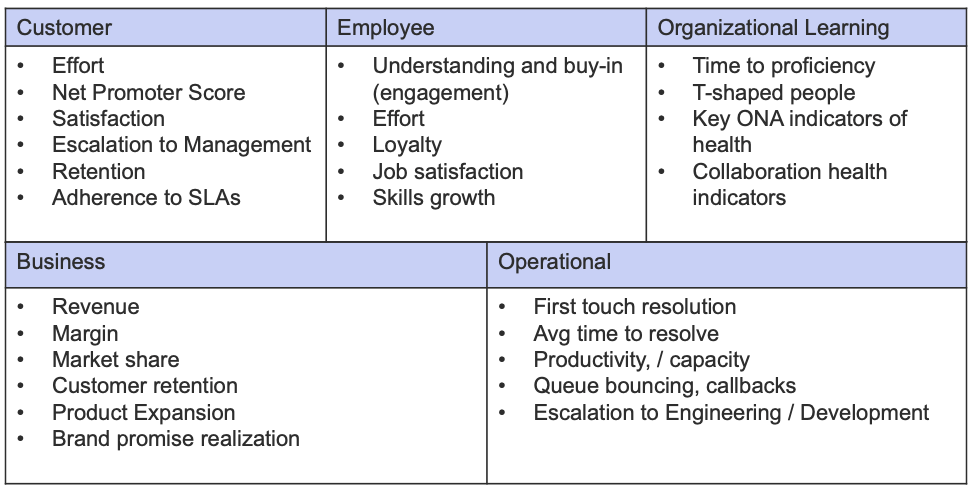

Assessment Perspectives

The Practice of Recognize calls out three distinct perspectives for consideration:

Business Outcomes

Intelligent Swarming does not change the highest-level goals of the organization. We are still interested in reducing customer effort; increasing customer success, productivity, and loyalty; and improving the speed, consistency, and accuracy of our responses to requests.

Intelligent Swarming does change how we deliver services to reach the desired outcomes. The definition of 'success', and how we measure it, varies from company to company. Designing the indicators of success takes careful thought, but many service organizations already have a good starting point and a collection of possible measures.

Like so many organizational change initiatives, Intelligent Swarming involves delayed gratification. Some benefits to knowledge workers and customers may become apparent within weeks, but the impact on top-level business outcomes may take months or even a year. Being clear about short-, mid-, and long-term outcomes helps set appropriate expectations.

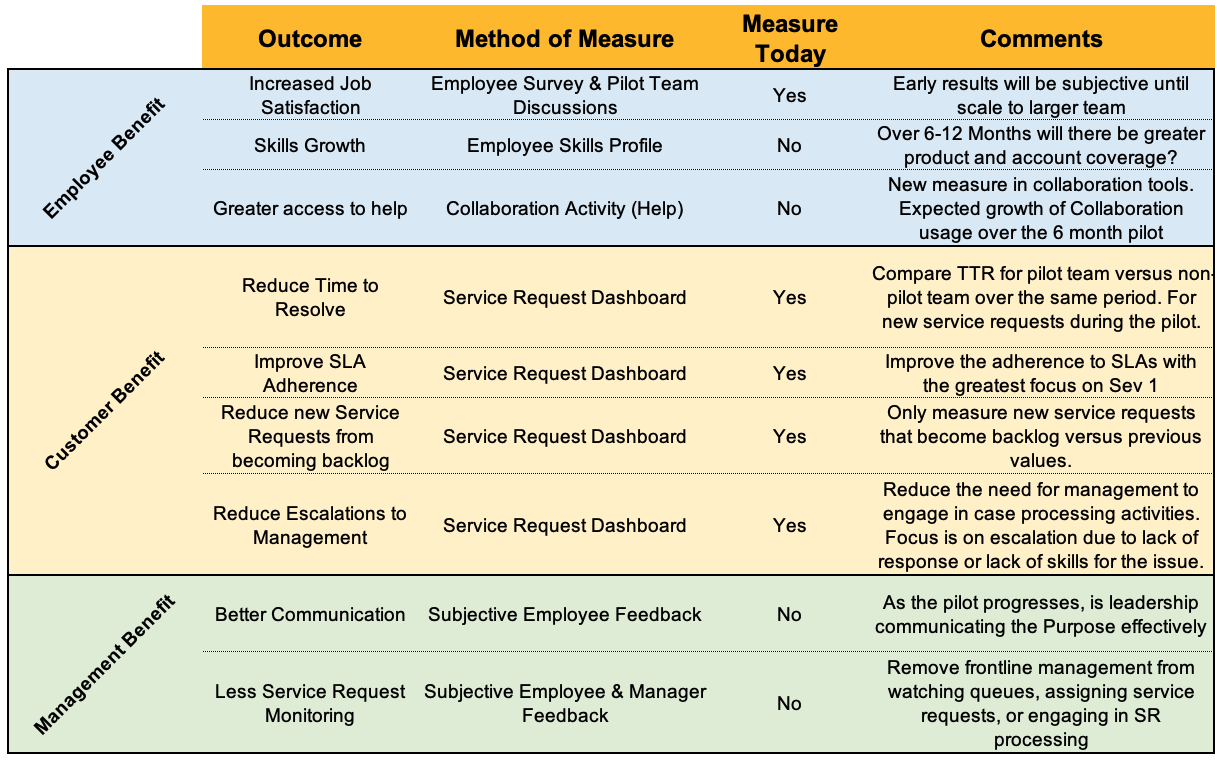

Simplified Example

From a Consortium Member's "Business Outcome Benefits" for their pilot:

- The design team and the Executive Sponsor of the program (SVP of the Organization) agreed that both objective and subjective measures would serve as indicators of success.

- No goals were placed on these measures, because they are indicators of change, not drivers of change.

- Some traditional measures, such as Customer Satisfaction or Net Promoter Score, are absent. This organization did not have enough survey returns on these measures for them to be meaningful to the pilot team.

Intelligent Swarming Health

To assess the health of the Intelligent Swarming model, there are a number of leading indicators or activities that are interesting to monitor. These measures should not have goals, but trends in the activities can be an early indicator of the desired behaviors. In fact, if we put goals on any of these activities, the trend becomes meaningless as an indicator of behavior. Do not put goals on activities!

- Frequency of use of knowledge worker’s expertise (how often do we go to an individual?)

- How long to ask for help (time from case open to raising a hand)
  - Too long? Too quick?

- Frequency of requests for help from a specific person, and how often any one individual is asked for help compared to the team average

- Time to respond: how long an individual takes to respond to a request

- Frequency of responses by individual

- Unanswered requests

- Feedback from the requestor of assistance about the responder

- Feedback from responder about the requestor

- Frequency of unsolicited offers of help

- Knowledge articles linked, improved, or created as a result of collaboration

- Updating or linking to Agile stories

- Participation in daily standup, posing good questions, and offering help

- Accuracy of the Visibility Engine in making relevant connections
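A few of the health indicators above can be computed from whatever event data a swarming tool records. The sketch below is a minimal Python illustration, assuming a hypothetical `HelpRequest` record (case open time, time the hand was raised, who was asked, and whether the request was answered); real tools will store this differently, and the helper names are illustrative only. It reports raw values and ratios for watching trends, with no targets attached.

```python
from __future__ import annotations

from collections import Counter
from dataclasses import dataclass
from datetime import datetime, timedelta


@dataclass
class HelpRequest:
    # Hypothetical record shape; field names are illustrative, not from any real tool.
    case_id: str
    case_opened: datetime
    hand_raised: datetime  # when the knowledge worker asked for help
    asked: str             # the person whose help was requested
    answered: bool


def minutes_to_raise_hand(requests: list[HelpRequest]) -> list[float]:
    """Minutes from case open to asking for help, one value per request."""
    return [(r.hand_raised - r.case_opened).total_seconds() / 60 for r in requests]


def requests_relative_to_team_average(requests: list[HelpRequest], team: list[str]) -> dict[str, float]:
    """How often each team member is asked for help, relative to the team average.

    1.0 means "asked as often as the average teammate". This is a pattern to
    discuss, not a score to maximize or minimize.
    """
    counts = Counter(r.asked for r in requests)  # missing names count as 0
    average = sum(counts[p] for p in team) / len(team)
    if average == 0:
        return {p: 0.0 for p in team}
    return {p: counts[p] / average for p in team}


def unanswered_count(requests: list[HelpRequest]) -> int:
    """Number of help requests that never received a response."""
    return sum(1 for r in requests if not r.answered)


# Illustrative data only.
t0 = datetime(2024, 1, 8, 9, 0)
requests = [
    HelpRequest("C1", t0, t0 + timedelta(minutes=30), "ana", True),
    HelpRequest("C2", t0, t0 + timedelta(minutes=90), "ana", True),
    HelpRequest("C3", t0, t0 + timedelta(minutes=15), "ben", False),
]
team = ["ana", "ben", "cho"]

print(minutes_to_raise_hand(requests))                    # [30.0, 90.0, 15.0]
print(requests_relative_to_team_average(requests, team))  # {'ana': 2.0, 'ben': 1.0, 'cho': 0.0}
print(unanswered_count(requests))                         # 1
```

Ana being asked twice as often as the team average could signal deep expertise, a bottleneck, or a knowledge gap elsewhere on the team; the number only opens the conversation.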

Individual & Team Contributions

Contribution assessment covers far more than just the new measures that we have for Intelligent Swarming. However, Intelligent Swarming is a change agent that forces organizations to rethink how measures are used, what they mean, and what kind of 'work' contributes to overall success. Assessing contribution (value creation) in a highly collaborative environment is challenging, in part because it is dramatically different from how we have assessed the contribution of individuals in the past. The traditional transaction-based model of counting activity has conditioned knowledge workers and managers to focus on numbers rather than value. Our goal is to assess who is creating value through contribution, not who looks busy on paper. Value is abstract and therefore not countable in any discrete way.

Consider the following scenario:

Two knowledge workers are working on a request. They have spent the last two hours trying to understand the issue and doing analysis and research. A colleague overhears the conversation (or, in a swarming environment, has visibility into the open issue because they have experience with the topic at hand) and suggests something to look into. Following their colleague's recommendation, the two knowledge workers solve the issue in the next 10 minutes.

How do we assess individual contribution in a scenario like this? It cannot be based on work minutes, or the number of events. Did the two knowledge workers wait too long to seek help? Did the work they did in analyzing a difficult situation enable their colleague to easily offer a helpful suggestion? What is the value of problem determination versus problem resolution?

As we have discussed, Intelligent Swarming is based on the understanding that every individual has different skills and different levels of competency in using those skills. No single measure will indicate the overall contribution and value someone is delivering. Assessing contribution requires a detailed understanding of all the skills that contribute to our success, and of the team's overall contribution to the business outcomes.

Based on our defined organizational outcomes, skills, and job requirements, we can create a multifaceted view of contribution against key areas. This also serves as a great tool for 'coach managers' to help people grow in their roles and functions.

Design Considerations for Contribution Measures

Developing a measurement model for Contribution (value creation) serves multiple purposes.

- As individuals, we want to know how we are doing and how the team is doing.

- As managers, we want to know how the team is doing, areas for improvement, and gaps in our ability to contribute to success.

The KCS v6 Practices Guide covers the idea of triangulation, which is relevant to Intelligent Swarming as well. Because the creation of value cannot be directly measured or counted (value is intangible), we believe the best way to assess the creation of value is through a process of triangulation. Just as GPS (global positioning system) devices calculate our location on the earth from the signals of multiple satellites, an effective performance assessment model incorporates multiple views to assess the creation of value.

An organization may have the most sophisticated measurement system possible, but it is only effective if managers know how to interpret the measures and how to have effective conversations with employees that influence behavior. Performance assessment and the creation of value is fundamentally about behavior and decision making, not about the numbers.

When designing Contribution Measures, keep in mind:

- We cannot depend on any one measure to determine if someone is contributing.

- Every individual and team will look different, since contributing to success can take many forms.

- Indicators should be used in the context of the environment.

- Trends in activities are interesting over time, but putting goals on those activities makes those trends meaningless.

- When discussing measures, we want to focus on desired behavior and not the number itself.

- DO NOT PUT GOALS ON ACTIVITIES.
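To watch a trend without attaching a goal, one option is to fit a simple least-squares slope to an activity's per-period counts and compare it with earlier periods. The Python sketch below assumes weekly counts and a hypothetical `tolerance` for calling a trend flat; neither comes from the source, and the function names are illustrative.

```python
from __future__ import annotations


def trend_slope(values: list[float]) -> float:
    """Ordinary least-squares slope of values against period index (change per period)."""
    n = len(values)
    if n < 2:
        raise ValueError("need at least two periods to compute a trend")
    x_mean = (n - 1) / 2
    y_mean = sum(values) / n
    num = sum((x - x_mean) * (y - y_mean) for x, y in enumerate(values))
    den = sum((x - x_mean) ** 2 for x in range(n))
    return num / den


def describe_trend(slope: float, tolerance: float = 0.1) -> str:
    """Label only the direction of change; there is deliberately no target value."""
    if slope > tolerance:
        return "rising"
    if slope < -tolerance:
        return "falling"
    return "flat"


# Illustrative weekly counts of unsolicited offers of help.
weekly_offers = [2, 3, 5, 6]
slope = trend_slope(weekly_offers)
print(slope, describe_trend(slope))  # 1.4 rising
```

The output describes direction, not success or failure; the useful follow-up is a conversation about what behavior is driving the change.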

Contribution Measures: An Example

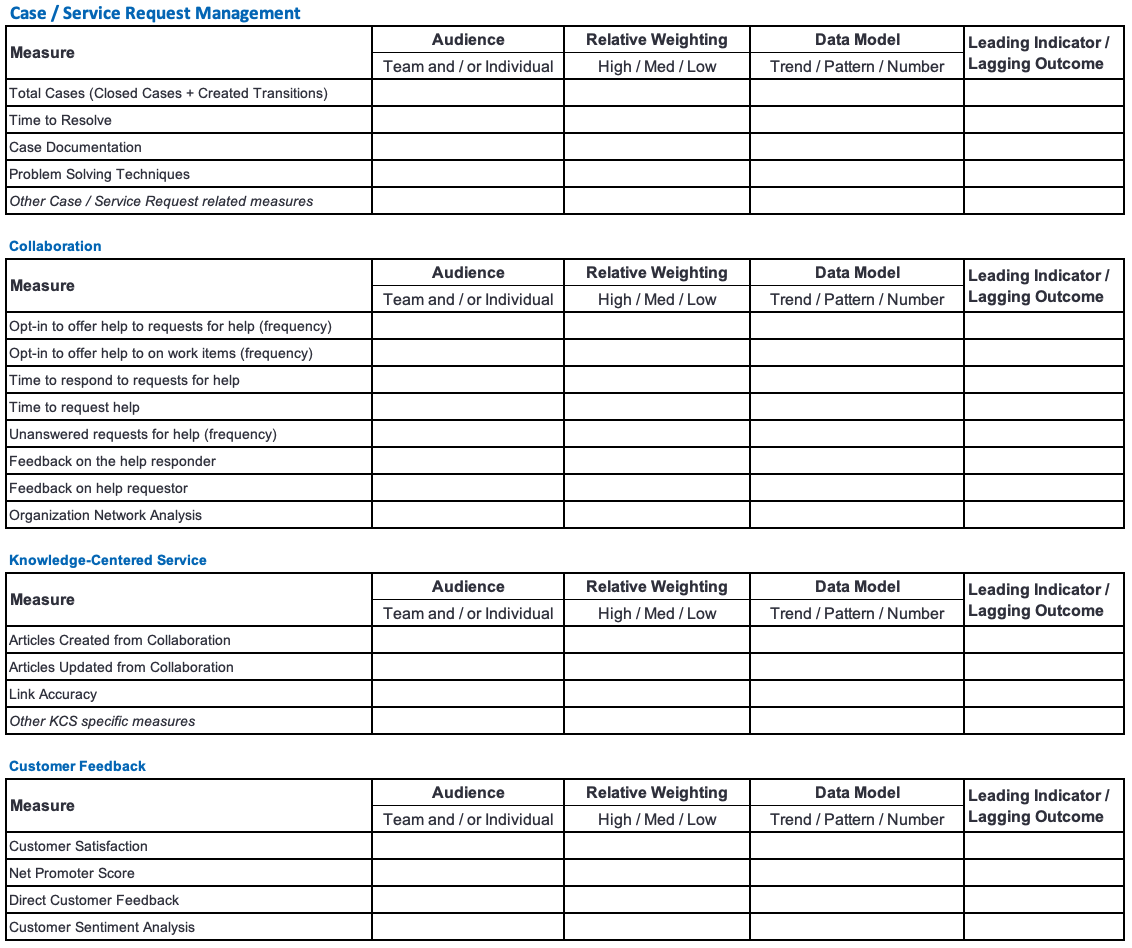

Over the last several years, techniques for measuring contribution have started to converge, but there is still quite a bit of experimentation as organizations broaden their perspective beyond the 'case' as the currency of support. Each environment may have more, fewer, or different measures.

Points to Triangulate

There are many dimensions that Member companies consider to help triangulate the contribution of teams or individuals. Depending on the focus of a change initiative, the maturity of some practices, or the new behaviors needed to reach success, the way these dimensions are used or communicated may shift.

Potential Points of Triangulation

- Case Management: Indicators of how an individual or team is managing the cases that they have ownership for

- Collaboration: Indicators of how an individual or team is collaborating to solve problems

- Problem Solving: Indicators of how an individual or team is using troubleshooting techniques

- Communication: Indicators of how an individual or team is communicating with others (internal and/or external)

- Channel Success: Indicators of how successful customers are in any number of engagement channels and/or how people are contributing to success in those channels.

- KCS Management: Indicators of how an individual or team is engaged with KCS Practices

- Customer Feedback: Indicators from the customer

- Organizational Learning: Indicators of the creation of T-shaped people and closing knowledge gaps

Each of these dimensions then has any number of indicators, leading or lagging, that can be used to give a picture of what is happening.

Weighting Indicators

Not all activities are equally important to success, and the relative importance of an activity may change over time. For example, early in an Intelligent Swarming implementation, we may want to focus more on behaviors that encourage collaboration and shift how people think about Asking for Help and Offering to Help. Adding a weighting factor to the measure of an activity or outcome can provide appropriate emphasis.
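Weighting can be applied while still keeping each point of triangulation visible. The Python sketch below assumes each dimension already has a normalized score between 0 and 1 and that the weights are chosen by the design team; the dimension names come from the list above, but the scores, the weights, and the `weighted_view` helper are hypothetical. It returns each dimension's weighted share alongside the weighted average, so the conversation can stay on the breakdown rather than on a single number.

```python
from __future__ import annotations


def weighted_view(scores: dict[str, float], weights: dict[str, float]):
    """Return (per-dimension weighted shares, weighted average).

    scores:  dimension -> normalized indicator score in [0, 1] (assumed scale)
    weights: dimension -> emphasis weight chosen by the design team (assumed)
    """
    missing = set(scores) - set(weights)
    if missing:
        raise ValueError(f"no weight given for: {sorted(missing)}")
    total_weight = sum(weights[d] for d in scores)
    if total_weight <= 0:
        raise ValueError("weights must sum to a positive number")
    # Each share is the dimension's contribution to the weighted average.
    shares = {d: scores[d] * weights[d] / total_weight for d in scores}
    return shares, sum(shares.values())


# Illustrative scores, with early-implementation weights that emphasize collaboration.
scores = {"Collaboration": 0.8, "Case Management": 0.5, "KCS Management": 0.6}
early_weights = {"Collaboration": 2.0, "Case Management": 1.0, "KCS Management": 1.0}

shares, overall = weighted_view(scores, early_weights)
for dimension, share in shares.items():
    print(f"{dimension}: {share:.3f}")
print(f"weighted average: {overall:.3f}")
```

Later in an implementation, lowering the Collaboration weight back toward 1.0 shifts the emphasis without changing the underlying indicators.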

Measures Template

The following template is pre-populated with some examples of measures that Consortium Member Companies are experimenting with to assess contribution. This is not intended to be 'the list' of measures, but rather a starting point for thinking through how to document and use measures.

Using Measures

How we use measures is more important than having measures, yet it seems we always want more measures without a plan for how we might use them. It is far too easy to get lost in the numbers themselves and lose sight of what the numbers mean. As always, be thoughtful about the consequences, both intentional and unintentional, that measures can have on an organization. As one senior executive put it, "If there is one thing we have learned at the Consortium, poor usage of measures is the best way to stop you from achieving desired outcomes."

Some questions to ask before focusing on a specific measure:

- Is the source of the data well understood and agreed to by all parties?

- Who is the intended audience of the measure?

- What is the intended use of the measure?

- If we see the measure change (trend, pattern, number), what action does that trigger?

- What behavior or culture shift is the measure intending to impact?

- How does the measure support the achievement of top-level goals in the organization / company?

Assessment Resources

Kaplan and Norton's The Balanced Scorecard is an excellent framework for measures; it helps us think about measures from different perspectives. It also highlights the critical distinction between activity measures and outcome measures. It describes a number of important concepts that we have embraced in the Intelligent Swarming Practice of Recognize:

- Link individual goals to departmental and organizational goals to help people see how their performance is related to the bigger picture.

- Look at performance from multiple points of view. The typical scorecard considers the key stakeholders: customers, employees, and the business.

- Distinguish leading indicators (activities) from lagging indicators (outcomes).

It is highly recommended that you review the Performance Assessment section in the KCS v6 Practices Guide (links below), where Consortium Members have developed foundational thinking about measures and value contribution that is very relevant to Intelligent Swarming.

Consortium Resources available to dive into metrics and measures:

- Measurement Matters v6: While this is written with KCS in mind, it offers a broad perspective, including a glossary of potential measures.

- Understanding Success by Channel: Ongoing work focused on how we measure and understand 'success' through different channels of engagement.