Progress Software: Application Support Services: KCS at Work

Improving Customer Support for Demanding Enterprises

Progress Software helps enterprises develop, integrate, and maintain enterprise applications using an open application framework and evolving programming models.

Tools Are Tempting, But No Panacea

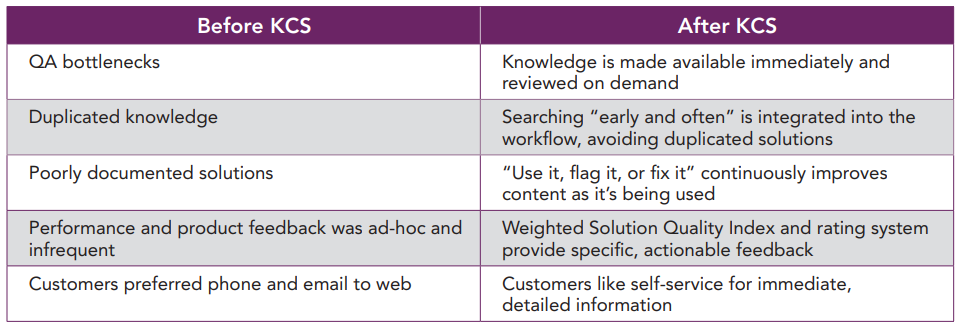

In 2002, Progress deployed a well-respected Knowledge Management (KM) tool. Their primary focus was capturing and sharing information to facilitate resolution. To reflect their processes and product complexity, they heavily customized the tool and relied on a Quality Assurance team to review and approve solutions.

However, they felt they were not getting the efficiencies and reuse that they had hoped for. First, they were struggling with a severe bottleneck in the creation and review process for approved “solutions.” The QA team was required to review 50 solutions per engineer per month, but the engineers were creating so many solutions that, out of every 100 created, only 25 were released, despite a QA group of up to 10 people.

Testing and verification were cumbersome as QA engineers often had to recreate the issue in the lab. Slow output dragged down customer and engineer satisfaction and reduced the incentive for engineers to create solutions or hunt for reusable ones.

Adding to the pressure, while some cases were closed quickly, not enough met the customer satisfaction closure goal of 1-2 days. Finally, the tool and its customizations caused error-inducing duplication.

Investing in People and Process

With these dissatisfiers in mind, the Progress management team decided to reassess their approach. Working with the Consortium for Service Innovation, they realized that they could apply Knowledge-Centered Support (KCS) practices to make their support centers more effective:

- Management emphasis on solution quality over quantity

- “Flag It or Fix It” demand-driven solution quality model

- KCS roles and responsibility licensing model

Step 1: New Performance Metrics - Quality, not Quantity

KCS practices require management commitment to sustain new processes and performance assessment tools. The first step for Progress was to realize that their emphasis on volume knowledge capture had resulted in duplications and poorly documented solutions. The management team decided to adapt incentive processes to emphasize quality over quantity and hold the engineers responsible for the knowledge they created and used.

To alleviate the solution review backlog, Progress started to publish almost all solutions to customers by default, or just-in-time, but with a disclaimer: “Unverified. This indicates that the Solution has not been through any formal review process yet, but that it has been used successfully in resolving at least one real-life customer issue.” These unverified solutions, linked to cases, provide a trove of detail on issues and experiences. Engineers can now search and find more information much earlier in a resolution.

Having the knowledge base available online pleases customers. As a precursor to logging a problem, customers can verify their details, consider some obvious resolutions, see contextual information, and provide feedback on content accuracy and relevance.

Engineers are now rated and rewarded on the number of solutions that are reviewed, and on the subsequent rating of these solutions during use. For each case, an existing solution is linked in, or a new solution is created. Engineers are required to rate and comment on reused solutions, although the rating can be changed later.

Progress Software

- Application frameworks for integrating cutting-edge technologies with legacy systems

- Consulting services to facilitate adoption

- 100 support staff serve over 5000 partners and end-user accounts

The Challenges

- High complexity of supporting multi-tier, multi-platform implementations

- Knowledge QA function created bottlenecks and solution redundancy

- Customized KM tools increased data entry and encouraged low quality entries

What They Did

- Changed management focus from content quantity to content quality

- Replaced QA role with in-the-workflow “flag it or fix it” process

- Changed roles and responsibilities to encourage knowledge capture throughout the support process

Key Benefits

- Increased engineer efficiency

- More cases resolved within 24 hours

- 60% of customers resolve cases with web self-service

The Results

- Improved customer satisfaction: faster resolution and online access to accurate, detailed information

- Better job performance: engineers get actionable feedback from customers and peers

- Higher quality knowledge: knowledge sampling and weighted ratings have helped drive solution quality and enable high reuse

Weighing Solution Quality

To make these ratings more meaningful and quantifiable, Progress created a weighted Solution Quality Index (SQI). The SQI guides feedback to a list of attributes that management wants to track and uses a multiplier of 1-5 to increase the weight of the criteria that are most meaningful. The highest weight is given to completeness, technical accuracy, clarity and usability, over less crucial but still important concerns like format and metadata. The SQI is used to formally assess engineers on an annual basis: pass or fail. If they fail, they get to be reassessed until they pass. Also, to help reinforce solution quality standards during certification, some solutions are randomly sampled and reviewed against the SQI.
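As a rough illustration of how a weighted index of this kind can be computed, the sketch below scores a solution as a weighted average of per-criterion ratings. The criterion names follow the paragraph above, but the specific weights, the 0-to-1 rating scale, and the pass threshold are assumptions made for the example; the case study does not state the actual values Progress used.

```python
# Hypothetical weights on the 1-5 multiplier scale described above.
# The case study gives only the relative ordering, not these values.
SQI_WEIGHTS = {
    "completeness": 5,
    "technical_accuracy": 5,
    "clarity": 5,
    "usability": 5,
    "format": 2,
    "metadata": 2,
}


def sqi_score(ratings: dict) -> float:
    """Return the weighted average of per-criterion ratings.

    Each rating is assumed to lie in [0, 1]; the result is also in [0, 1].
    """
    missing = set(SQI_WEIGHTS) - set(ratings)
    if missing:
        raise ValueError(f"missing ratings for: {sorted(missing)}")
    total_weight = sum(SQI_WEIGHTS.values())
    weighted_sum = sum(SQI_WEIGHTS[name] * ratings[name] for name in SQI_WEIGHTS)
    return weighted_sum / total_weight


def passes_assessment(ratings: dict, threshold: float = 0.8) -> bool:
    """Pass/fail check against an assumed threshold (not from the source)."""
    return sqi_score(ratings) >= threshold
```

With these assumed weights, a solution that is complete, accurate, clear, and usable but has poor format and metadata still scores well above one that is only tidy, which is the effect the multiplier is meant to produce.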

Customer ratings are weighed with engineer ratings when assessing solution quality, and engineers see the comments on their solutions. This feedback loop helps drive knowledge base quality.

The Progress management team was proactive throughout adoption. Said Peter Holt, director of worldwide technical support, “To ensure our success with KCS, we started in Europe, then had European staff come and implement in our USA head office. This helped worldwide buy-in and avoided the ‘I’m from the head office, I’m here to help you’ syndrome. Secondly, we looked for early wins and regularly updated everyone. You need real stories here; this means sitting down with engineers and asking questions like ‘Has it surprised you?’, ‘Have you solved problems where before you could not?’ Finally, we held the course. Initially, we were creating many more solutions than we were reusing - at times this was painful overhead. However, reuse kept increasing until today it far outweighs the creation of new solutions.”

Step 2: Reuse is Review; “Use It, Flag It or Fix It” Made Part of the Culture

To maintain and improve the knowledge in the knowledge base, Progress has rigorously integrated “use it, flag it or fix it” in their workflow. This demand-driven KCS approach helps ensure QA time is invested where the need is greatest.

- Use it: Search early and often. If a solution fits the problem, use it, link it to the case, and rate it. If no solution is appropriate, start a new one.

- Flag it: If the solution seems problematic, the engineer can “flag” or mark and comment on the solution. Flagged solutions are used with care while the owner corrects them.

- Fix it: Minor issues like spelling or grammar are fixed on the fly.
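The three actions above can be sketched as operations on a knowledge base entry. This is a minimal illustration, not a description of the tool Progress used: the `Solution` class, its field names, and the function names are all hypothetical.

```python
from dataclasses import dataclass, field


@dataclass
class Solution:
    """Hypothetical knowledge base entry; field names are illustrative."""
    solution_id: str
    text: str
    owner: str
    flagged: bool = False
    flag_comments: list = field(default_factory=list)
    ratings: list = field(default_factory=list)
    linked_cases: list = field(default_factory=list)


def use_it(solution: Solution, case_id: str, rating: int) -> None:
    """Use it: link the solution to the case and record a rating."""
    solution.linked_cases.append(case_id)
    solution.ratings.append(rating)


def flag_it(solution: Solution, comment: str) -> None:
    """Flag it: mark the solution and leave a comment for its owner."""
    solution.flagged = True
    solution.flag_comments.append(comment)


def fix_it(solution: Solution, corrected_text: str) -> None:
    """Fix it: apply a minor correction (spelling, grammar) on the fly."""
    solution.text = corrected_text


# Example: an engineer reuses a solution, fixes a typo, and flags a concern.
solution = Solution("S-1", "Restart teh broker after changing the port.", owner="alice")
use_it(solution, case_id="C-100", rating=4)
fix_it(solution, solution.text.replace("teh", "the"))
flag_it(solution, "Step order looks wrong on multi-tier setups.")
```

Because every action happens on the entry the engineer is already using, review effort follows demand: entries that are never reused are never touched.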

Through the KCS certification process, engineers are equipped to understand the ramifications of their choices and annotations in the knowledge base. They contribute to its quality and utility on a daily basis and acquire a sense of ownership and responsibility for its accuracy.

As of 2005, Progress had 31,000 solutions in the database. Using the “flag it or fix it” model, they created almost 4400 new solutions in 2005, with about 1000 having been modified through the rating and “flag it or fix it” process. As of mid-2006, more than 11,000 of the solutions had been modified or flagged to be reviewed, showing that about one-third of the knowledge in the knowledge base was in active use.

New solution creation rates continue to drop as reuse increases. According to Holt, “I was over the moon (and thought we had peaked) when the solution reuse to creation rate was three to two. Today, we’re at a reuse factor of eight or nine to one.”

Step 3: New Roles - Certified Engineers, Coaches, and a Domain Manager

KCS practices increase the relevance and utility of information by capturing knowledge during case management and resolution, rather than after the case is closed. To make this real-time process easier to implement, KCS defines key roles, including pre-certified and certified engineers, coaches, and the Knowledge Domain Manager or Knowledge Champion.

Engineers receive KCS training to improve the accuracy and clarity of the knowledge as they write, review, publish, and use the knowledge in the knowledge base. Pre-certified engineers are those who have been trained but are not yet experienced enough to review and revise another engineer’s solutions.

The coaches, working support engineers who serve as part-time mentors, receive specialized influence and change management training to help them facilitate the team’s adoption and use of KCS. Progress has a 1:8 ratio of coaches to engineers.

The Knowledge Domain Manager (KDM) is the expert on the knowledge base and its architecture and can be the arbiter when solutions collide. At Progress, the KDM’s first project was to look at ways to enhance and reuse the existing 25,000 solutions. Commented KDM Haneet Laffin, “Part of our problem had been in using the power of the tools. It became my priority to use the KM tool to monitor search strings, solutions found and considered, and the case log, to understand the solution process. I was able to see when solutions were started, but abandoned. With this visibility, I was quickly able to fix, flag, or recommend new content based on solution demand.”

Next Steps

By creating an effective online knowledge center, with more than four times as many verified solutions visible as before KCS, Progress now handles 14,000 questions on their online center each week, versus 500 per week on their phone lines. Of the customers who respond to their survey, 60% report that they can resolve their problems themselves online.

Progress wants to enhance the online customer experience to drive down costs and increase the immediacy of information. They are improving the user interface, making it more obvious and simple to perform effective searches. Also, every user comment on a solution is now being treated as a support incident, enabling follow-up by the knowledge owner.

Knowledge-Centered Support at Work

Progress Software’s experiences with knowledge management underscore the value of Knowledge-Centered Support. Their successes are based on strong execution of the following KCS practices:

- Capture in the Workflow. Progress redefined roles and provided training to ensure knowledge was created during each step of case resolution.

- Flag It or Fix It. All analysts take responsibility for the quality of the knowledge base content as they interact with it.

- Performance Assessment. Progress management now emphasizes quality and relevance in their metrics and reward systems.

- Leadership. Visible, ongoing commitment by management reinforced the message that KCS was a long-term standard for delivering support.

Case study developed by DB Kay & Associates (www.dbkay.com) for the Consortium for Service Innovation. © 2006 Consortium for Service Innovation. All Rights Reserved. Consortium for Service Innovation and the Consortium for Service Innovation logo are trademarks of Consortium for Service Innovation. All other company and product names are the property of their respective owners.